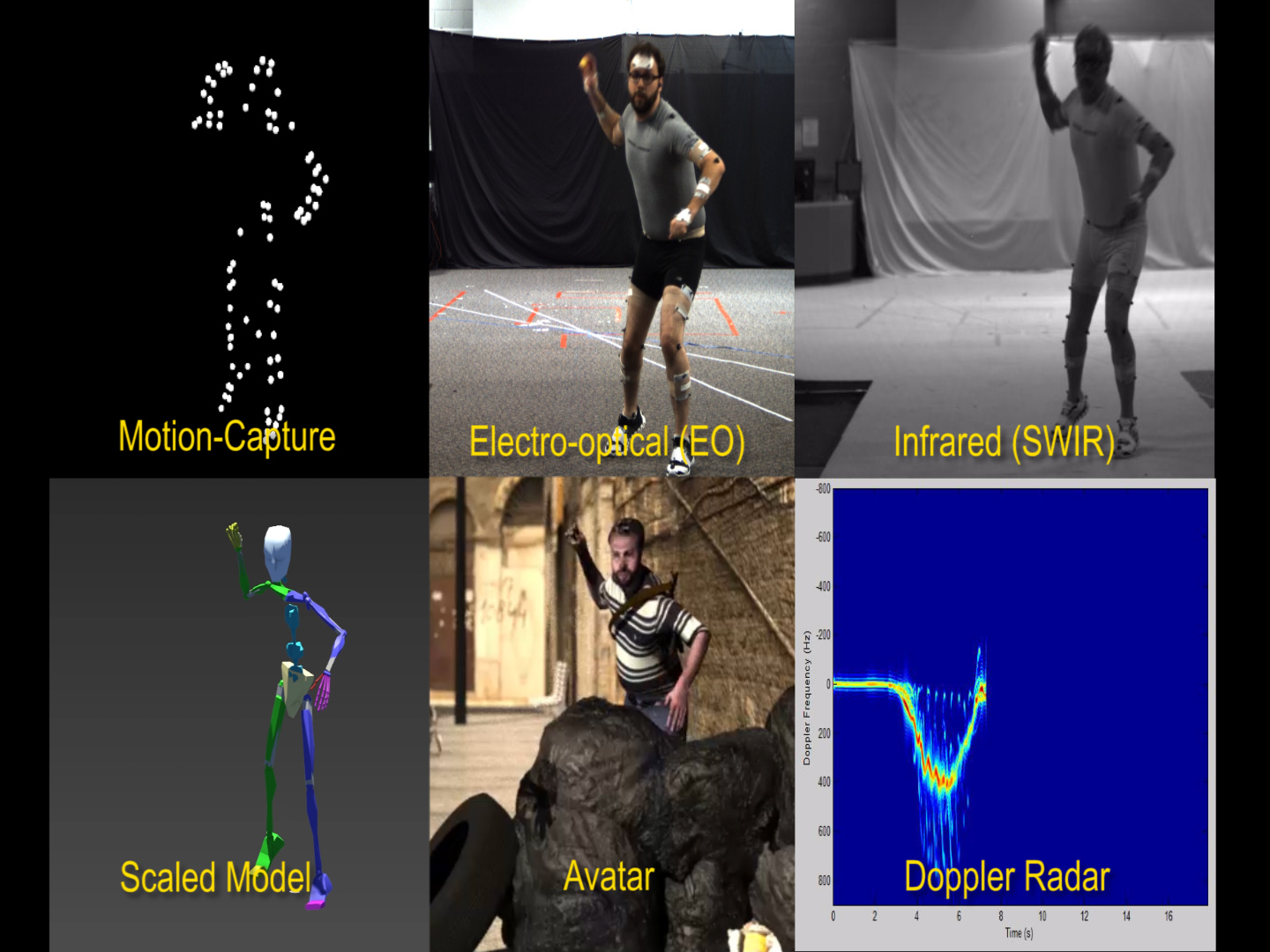

Using data from 3-D cameras and motion capture cameras, researchers can figure out the angle of joints, an intended movement such as crouching, whether a weapon is present, the person’s pace, and even their age. Photo courtesy of the Air Force Research Laboratory.

An AFRL department is working to figure out what a person may be concealing—among other things—just by looking at them.

“We can put a story together of who they are,” said Jennifer Whitestone, a bioengineer with the 711th HPW who’s been working on human body modeling for the better part of three decades. “Gait says a lot.”

Gait—or the way a person walks or moves—is one of the data sets Whitestone’s cameras are able to capture. The two different types of cameras, three-dimensional ones and motion capture ones, give static and dynamic information to researchers. 3-D surface data, what Whitestone calls the “cartography of the body,” is captured in a static sense (though many photos are taken at once). It provides information like stature, leg length, body size, and even gender. Motion capture cameras can determine the angle of joints, an intended movement like crouching, whether a weapon is present, the person’s pace, and even their age (or at least the ability to differentiate an adult from a child and vice versa).

This information can become very useful at a security checkpoint. Currently (and “typically,” Whitestone insisted), human operators look at oncoming human traffic using low-fidelity cameras, which are themselves “looking at the video through a window.” Those cameras won’t be replaced by Whitestone’s tech, which would be too expensive, and replacement is not the idea. Instead, Whitestone’s tech will analyze what current surveillance systems see and use lessons learned to translate that information into conclusions for the human operator.

“What we’re trying to do is give them assistive devices to help them figure out what may be happening,” Whitestone told Air Force Magazine, referring to servicemembers at checkpoints. But, she added, this open-ended pursuit presents “very attractive research” not just for DOD but more generally to any first responders and non-military checkpoints. Whitestone wasn’t able to share whether any of her research is currently used in deployed materiel.

“We respond to other customers who may be interested in injury assessment or prevention of injury,” she said. For example, the 711th HPW—more specifically, Whitestone’s human detection and characterization team, which is part of the human signatures branch of the HPW—took in data about USAF maintainers, using shape analysis to determine “how we reduce their risk … within a confined space.”

The team has also been working with Bowling Green State University’s School of Human Movement, Sport and Leisure Studies. Through a 2012 educational partnership agreement that’s expected to run at least through 2019 (and maybe longer, considering the agreement was upped in 2015), AFRL provides motion capture and 3-D capture cameras to the school in exchange for extra eyes and ideas toward its own research. For example, the school analyzed soccer kicking and hockey slap shot techniques. That research revealed a clear difference between the way skilled and unskilled soccer players engage their torso. While that may seem like common sense, specific research of this sort allowed for models of specialized treadmills to assist those unskilled players.

Whitestone considers this collaboration “beneficial” since it’s a solid “cross fertilization of people and ideas.”

“If you bring in a student who doesn’t necessarily like STEM but likes soccer,” she said, “suddenly you have a student who’s ready to join the STEM field.”

Whitestone, who left the Air Force in 1998 to pursue a human modeling company she still heads, returned in 2015 and, having seen the evolution of the technology, is excited about where it’s headed. Having quick access to data using small machines (she noted motion capture cameras used to require rooms of computing but now fit into handheld devices) is essential to a safe future.

“We’re in desperate need of that in real-time,” she said.