AURORA, Colo.—Just one year ago, Collaborative Combat Aircraft took center stage as then-Chief of Staff Gen. David W. Allvin designated the two competing jet prototypes as the first unmanned fighters in Air Force history: General Atomics’ YFQ-42A and Anduril Industries’ YFQ-44A.

Twelve months later, it’s the autonomy software flying those aircraft that is garnering the attention. Autonomy software, more than hardware, may prove the most valuable and enduring element of the CCA program.

Two autonomy software suites are competing, and both have flown aircraft from both General Atomics and Anduril. Indeed, halfway through the Warfare Symposium, Anduril said its YFQ-44A had flown under two systems—one of the competitors and its own system—in a single flight.

With AI the talk of the nation these days, Air Force CCAs are among the most challenging applications in the works. The “AI pilots” that will fly CCAs must be able to safely operate in tandem with crewed fighters and execute missions dictated by their human fighter pilot “quarterbacks.”

Autonomy has been an objective capability since at least 2018, when the Air Force Research Laboratory was running its “Skyborg” experiments, noted Col. Timothy Helfrich, portfolio acquisition executive for fighters and advanced aircraft, during a panel discussion at the Symposium.

Skyborg was software independent of the airframe, but even so, in a world where competitions have long been between competing airframes, industry focus turned to the hardware, not the software.

That has largely remained true as General Atomics and Anduril have battled in the media over preeminence while the Air Force awaits the final decision in what it’s dubbed CCA Increment 1. But just as the Air Force began the hardware competition with five entries before narrowing to two, it likewise awarded contracts to five companies to work on autonomy software. Officials have thus far declined to name all of them.

In February, however, the Air Force finally lifted the lid on its software efforts. A Feb. 12 release revealed that Collins Aerospace had been paired with General Atomics to test Collins’ “Sidekick” autonomy software, while Shield AI and Anduril would test Shield’s “Hivemind” system.

Sidekick and Hivemind are artificial intelligence flight control and management software designed to fly any host platform, then learn to execute missions as directed.

“We see it as essentially the pilot in the seat,” said Lt. Col. Matthew Jensen, commander of the Air Force’s Experimental Operations Unit, which is developing tactics and procedures for operating autonomous systems and integrating them with manned fighters.

Just as pilots can and do switch aircraft, these AI systems can learn to fly different platforms. Shield AI’s Ben “Billy Ray” Bradley, a retired Air Force pilot, told Air & Space Forces Magazine that the idea that the CCA program has locked its aircraft builders and software providers together into two competing teams isn’t accurate.

“The Air Force has paired us with Anduril for flight, but we also, in simulation, can and do fly on the GA air vehicle,” Bradley said. Hivemind has already flown General Atomics’ MQ-20 Avenger, the aircraft on which the YFQ-42A was based.

“We are required—because the whole purpose is that we are agnostic to any platform—to be able to fly on both platforms in simulation,” Bradley said. “But because you don’t have all the time to go do actual flight tests, [the Air Force] partnered us with one platform to go do flight tests. But it’s not as if we can’t fly on GA’s platform.”

Hivemind has also flown multiple aircraft from drone-maker Kratos, and even a variant of Airbus’ UH-72 Lakota helicopter. It’s also scheduled to fly on Northrop Grumman’s Talon IQ drone, a variant of Northrop’s YFQ-48A Talon Blue CCA contender, though Shield AI declined to say when.

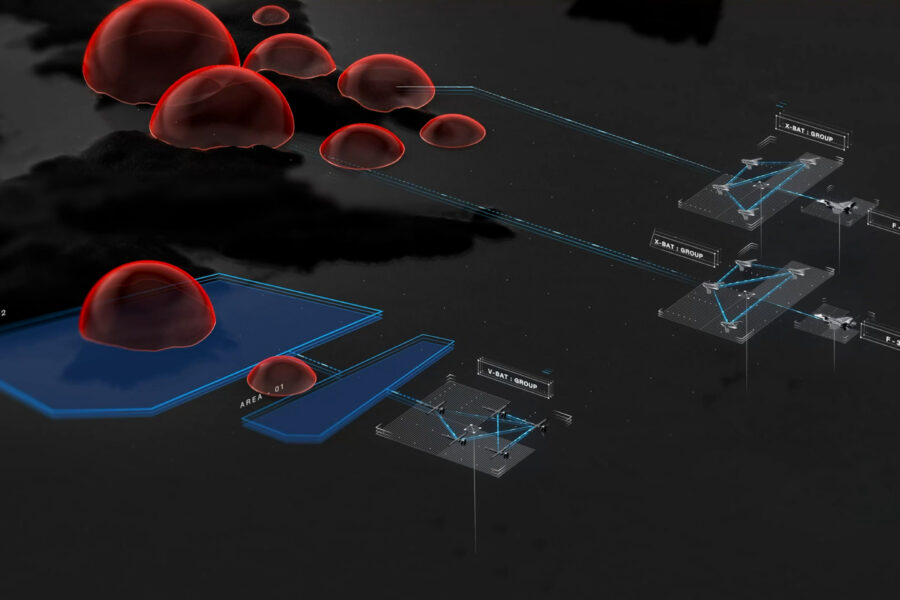

And it is the brains for Shield AI’s own developmental CCA, dubbed X-BAT. One of the most eye-catching displays at the Symposium was a 45 percent scale model of the X-BAT, which is designed to launch vertically from a trailerable launch rig. The model at the Symposium came complete with “Powered by Hivemind” emblazoned on the wing.

The full-sized version Shield intends to fly later this year will be about the size of an F-16, powered by a GE F110 engine, a powerplant also used in the F-16.

Collins Aerospace has not been as public as Shield in detailing Sidekick’s flights. But a spokesperson told Air & Space Forces Magazine that Sidekick flew on “three or four” platforms during its classified development.

Sidekick beat Hivemind in the race to fly one of the CCAs first. General Atomics announced Feb. 12 that Sidekick had flown the YFQ-42A earlier in the month, with a human on the ground managing the system.

That put Sidekick perhaps three weeks ahead of Shield AI, since it wasn’t until Feb. 25 that Helfrich revealed at the conference that Hivemind had made its first flight with Anduril’s YFQ-44A.

Both Sidekick and Hivemind “performed as expected,” Helfrich added, which Jensen called a good first step.

“We’re probably treating it much like we would a student pilot: ‘Hey, let’s get through the basics: Can you stay in your space? Can you fly? Can you avoid other aircraft?’” Jensen said. “And then as we build confidence in that, we’ll start to add more and more capability, more and more challenges to it. And the goal is that eventually you have a transferable capability that is as good as your high-end, highly proficient fighter pilot.”

There was one wrinkle on the YFQ-44A flight, Helfrich noted, that demonstrated an important point about these new systems: “What we did was we flew one mission autonomy [from] Shield AI, and then in the same flight, without landing, we went and pivoted to a second mission autonomy, same flight.”

In essence, the drone had switched pilots midflight. The second pilot was Anduril’s “Lattice” AI flight software, and the company reported it executed the same test points as Hivemind.

Anduril was able to execute that midflight switch for the same reason Collins and Shield AI have been able to move their AI pilots between different aircraft: the Autonomy-Government Reference Architecture.

The A-GRA is a “baseline” of autonomy owned by the U.S. government. Every A-GRA-compliant aircraft must have flight autonomy software: “the parts that are highly coupled with your flight and safety critical software, so just the basic things that make sure that the aircraft flies and it’s safe,” Helfrich said. And all A-GRA-compliant mission autonomy software must be able to connect to the aircraft’s flight autonomy software. When a human operator gives the basic direction for a CCA to take flight, the mission autonomy software is the pilot that directs the flight software to take off.

A-GRA underpins the Air Force’s CCA program. Without it, the AI pilots would have to be customized to each aircraft, creating an extensive additional layer of programming. By standardizing the interface between the AI pilot and the internal flight system, the AI pilots can become interchangeable.
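For readers who think in code, the decoupling described above can be sketched in a few lines of Python. This is only an illustration of the general idea of a standard interface between a "pilot" and an airframe, not a depiction of the actual A-GRA, whose details are not described here. Every name in it (`MissionAutonomy`, `Airframe`, `PilotA`, `PilotB`, `fly`) is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Protocol


class MissionAutonomy(Protocol):
    """Hypothetical standard interface every 'AI pilot' must satisfy."""
    name: str

    def decide(self, tasking: str) -> str: ...


@dataclass
class Airframe:
    """Hypothetical airframe; its own flight software carries out maneuvers."""
    designation: str
    log: list[str] = field(default_factory=list)

    def execute(self, maneuver: str, pilot_name: str) -> None:
        # The airframe only sees a maneuver request, never the pilot's internals.
        self.log.append(f"{self.designation}: {maneuver} (requested by {pilot_name})")


@dataclass
class PilotA:
    name: str = "pilot_a"

    def decide(self, tasking: str) -> str:
        return f"hold formation for {tasking}"


@dataclass
class PilotB:
    name: str = "pilot_b"

    def decide(self, tasking: str) -> str:
        return f"fly test points for {tasking}"


def fly(airframe: Airframe, pilot: MissionAutonomy, tasking: str) -> None:
    """Any compliant pilot can drive any compliant airframe."""
    airframe.execute(pilot.decide(tasking), pilot.name)


# Two airframes and two pilots: every pairing works without custom glue code,
# and swapping pilots on one airframe is just another call to fly().
jet_a = Airframe("airframe_a")
jet_b = Airframe("airframe_b")
for jet in (jet_a, jet_b):
    for pilot in (PilotA(), PilotB()):
        fly(jet, pilot, "test sortie")
```

In this toy version, adding a third pilot means writing one new class rather than one integration per airframe, which is the kind of saving the standardized interface is meant to deliver.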

Bradley said it takes just weeks to integrate the AI pilot with a new platform, and Shield AI is getting faster every time it puts Hivemind onto a new one.

Decoupling the airframe from the AI pilot means the Air Force will have not one but two decisions to make as it narrows the field of competitors in Increment 1 later this year. With two aircraft and two pilot systems, there are four possible combinations to choose from.

Anduril’s midflight switch of mission autonomy software highlighted another aspect of the Air Force’s CCA program, one also demonstrated by the service’s surprise designation of Northrop’s YFQ-48A in December. As Gen. Dale R. White, the new czar for the Air Force’s top programs, including CCA, put it: “If you’re eliminated from a competition, the door never remains closed.”

“You can continue to develop,” White said. “You may not get funded by the government, but at the end, if you think that you’re going to produce a better product, … you can … come back and still compete.” Anduril, for example, was never confirmed as being among the five companies competing for the CCA autonomy software contract, but in a blog post, the firm’s senior vice president for engineering, Jason Levin, hinted that it had taken White’s advice and self-funded development of its Lattice AI pilot.

Operations Development

Even before the Air Force narrows to a chosen airframe and AI pilot, the service is standing up an experimental team to develop operational concepts, tactics, and procedures for how CCAs will be used in practice.

Helfrich, in fact, said “the most important thing” his team will do to advance CCAs this year is not the down-select decision, but rather to put CCAs in the hands of Jensen’s Experimental Operations Unit this coming summer.

Putting CCAs under the operational control of Airmen is not testing, but experimenting, figuring out how the autonomy works and orienting the humans to work effectively with the AI pilots.

For the AI itself, this phase will be about finding the limits of the systems and injecting rapid updates to improve them. Helfrich described the starting point as fielding a “minimum viable product,” a common term in the tech world, as the first step to developing improvements.

“We’re setting up the structure of the program to be able to respond and integrate with the operator every single day,” he said. “And so what you get on Day One, that’s just the first step. You’re going to keep getting better and better.”

The A-GRA is key to that. Shield AI and Collins can update their AI pilots without worrying about the safety of the aircraft itself, because the aircraft’s flight software is already settled and the interfaces are standard.

Potential updates will address weaknesses in the AI pilot’s performance, enabling it to improve. Jensen noted that while the A-GRA sets a baseline, the systems are neither the same nor completely equal.

Like people, the AI pilots can improve, and so will the human pilots working with them, predicted Brig. Gen. David C. Epperson, commander of the U.S. Air Force Warfare Center.

“That’s the crux of having the EOU and getting platforms actually in their hands, so that we can start to get sets and reps,” Epperson said. “And what we’re going to see over time as these [vendors] change their mission autonomy [and] as we advance the program, … we’re going to understand better how those changes matter to both the CCA, [and] the quarterback and how we train up that aircrew as we move forward.”

The entire effort is about building trust between the operators and the AI pilots, just as it is with building a team in a squadron.

“We actually have to deploy this in a way that allows our human brain to stay wrapped around the decision space, so that I can intuitively assess the inputs and the outputs,” said Lt. Gen. Luke C.G. Cropsey, military deputy to the Assistant Secretary of the Air Force for Acquisition, Technology, and Logistics.

In other words, if the human pilot cannot understand why the AI pilot made the decision it did, Airmen won’t have the necessary confidence to trust the AI operationally. The AI has to react rationally to the human pilot’s instructions.

Jensen said his experimental unit embraces that perspective, and he intends to have human pilots “debrief” with the autonomy software just as they currently do with each other after each sortie.

“I want to be able to talk to a large language model and explain what the autonomy did at a certain time and certain place, and [ask it to] provide the reasons why it did it,” he said.

Conceptually, that’s reasonable, but it’s hard to debrief a machine. Such a capability is still in development, but Helfrich and Jensen both said it’s critical to start building trust and familiarity now, rather than waiting to have every aspect of the AI pilot refined and trained to perfection first.

Jensen also said he plans to bring CCAs to major exercises “as soon as we can,” which may be unsettling to some.

“[That] will probably surprise some people when they show up to Red Flag and they’re like, ‘Why are the robots flying with us?’” Jensen said. “But you know, we’ll drive it and see what happens.”

The robots are coming, and it’s humans who will teach them and take them to the fight.